
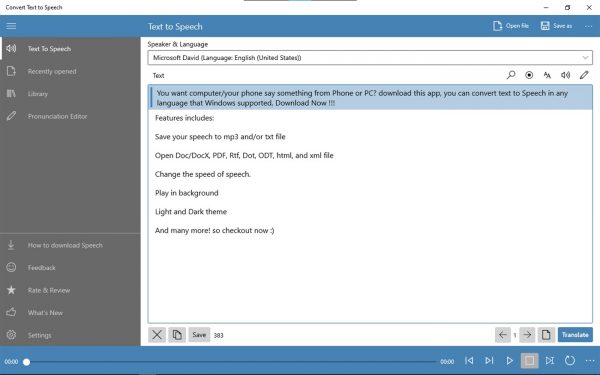

Getting the API access key and region: lucoiso/UEAzSpeech. More information: UEAzSpeech/README.

With an AudioConfig for the default microphone, initialize SpeechRecognizer by passing audioConfig and speechConfig:

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.CognitiveServices.Speech;
using Microsoft.CognitiveServices.Speech.Audio;

async static Task FromMic(SpeechConfig speechConfig)
{
    // Capture audio from the default microphone (as set in the OS sound settings).
    using var audioConfig = AudioConfig.FromDefaultMicrophoneInput();
    using var speechRecognizer = new SpeechRecognizer(speechConfig, audioConfig);

    Console.WriteLine("Speak into your microphone.");
    // Recognize a single utterance and print the transcribed text.
    var result = await speechRecognizer.RecognizeOnceAsync();
    Console.WriteLine($"RECOGNIZED: Text={result.Text}");
}
```

For continuous recognition, make the following call at some point to stop recognition:

```csharp
await speechRecognizer.StopContinuousRecognitionAsync();
```

A common task for speech recognition is specifying the input (or source) language. The following example shows how you would change the input language to Italian. In your code, find your SpeechConfig instance and add this line directly below it:

```csharp
speechConfig.SpeechRecognitionLanguage = "it-IT";
```

The SpeechRecognitionLanguage property expects a language-locale format string. Refer to the list of supported speech to text locales.
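The stop call only makes sense once continuous recognition has been started, and this post never shows that step. The following is a minimal sketch that sets the source language to Italian, starts continuous recognition on the default microphone, prints each recognized phrase and stops when Enter is pressed. It assumes the Microsoft.CognitiveServices.Speech NuGet package and a SpeechConfig created elsewhere in your code. The class name ContinuousFromMic is hypothetical.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.CognitiveServices.Speech;
using Microsoft.CognitiveServices.Speech.Audio;

// Hypothetical helper class, for illustration only.
static class ContinuousFromMic
{
    // Runs continuous recognition on the default microphone until Enter is pressed.
    public static async Task RunAsync(SpeechConfig speechConfig)
    {
        // Source language as a language-locale string (Italian, as in the example above).
        speechConfig.SpeechRecognitionLanguage = "it-IT";

        using var audioConfig = AudioConfig.FromDefaultMicrophoneInput();
        using var speechRecognizer = new SpeechRecognizer(speechConfig, audioConfig);

        // Raised once per recognized phrase while recognition is running.
        speechRecognizer.Recognized += (sender, e) =>
        {
            if (e.Result.Reason == ResultReason.RecognizedSpeech)
            {
                Console.WriteLine($"RECOGNIZED: Text={e.Result.Text}");
            }
        };

        // Raised on errors such as a wrong key or region.
        speechRecognizer.Canceled += (sender, e) =>
        {
            Console.WriteLine($"CANCELED: Reason={e.Reason} Details={e.ErrorDetails}");
        };

        await speechRecognizer.StartContinuousRecognitionAsync();
        Console.WriteLine("Listening. Press Enter to stop.");
        Console.ReadLine();

        // Recognition keeps running until it is stopped explicitly.
        await speechRecognizer.StopContinuousRecognitionAsync();
    }
}
```

Unlike RecognizeOnceAsync, which returns after one utterance, the event-based approach keeps delivering results until StopContinuousRecognitionAsync is awaited. This makes it the better fit for dictation-style input.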

To recognize speech by using your device microphone, create an AudioConfig instance by using FromDefaultMicrophoneInput(). When the key M is pressed, Azure will listen to your default microphone (from Windows OS settings) and print the recognized speech as a string on your screen.

For the Azure Percept Audio, a keyword is the word that the Percept DK listens for in order to begin listening for commands. Sometimes referred to as a wake word, this word defaults to "Computer" in the Hospitality sample. We have access to some pre-trained keywords deployed along with the sample.

Regardless of whether you're performing speech recognition, speech synthesis, translation, or intent recognition, you'll always create a configuration:

```csharp
var speechConfig = SpeechConfig.FromSubscription("YourSpeechKey", "YourSpeechRegion");
```

You can initialize SpeechConfig in a few other ways:

- With an endpoint: pass in a Speech service endpoint. A key or authorization token is optional.
- With an authorization token: pass in an authorization token and the associated region/location.
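The two alternative ways of initializing SpeechConfig can be sketched as follows, assuming the Microsoft.CognitiveServices.Speech NuGet package. The class and method names are hypothetical. For the endpoint case, only the overload that also takes a key is shown. The endpoint and token values are placeholders you would supply.

```csharp
using System;
using Microsoft.CognitiveServices.Speech;

// Hypothetical wrapper, for illustration only.
static class AlternativeConfigs
{
    // With an endpoint: the URL of your Speech service endpoint, plus the resource key.
    public static SpeechConfig WithEndpoint(string endpoint, string key) =>
        SpeechConfig.FromEndpoint(new Uri(endpoint), key);

    // With an authorization token: the token plus its associated region/location.
    // Tokens are short-lived, so a long-running recognizer needs a fresh token
    // assigned to its AuthorizationToken property before the old one expires.
    public static SpeechConfig WithToken(string token, string region) =>
        SpeechConfig.FromAuthorizationToken(token, region);
}
```

The token route keeps the long-lived key on a server that issues tokens, so client apps only ever hold a short-lived credential.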

For more information, see Create a new Azure Cognitive Services resource.

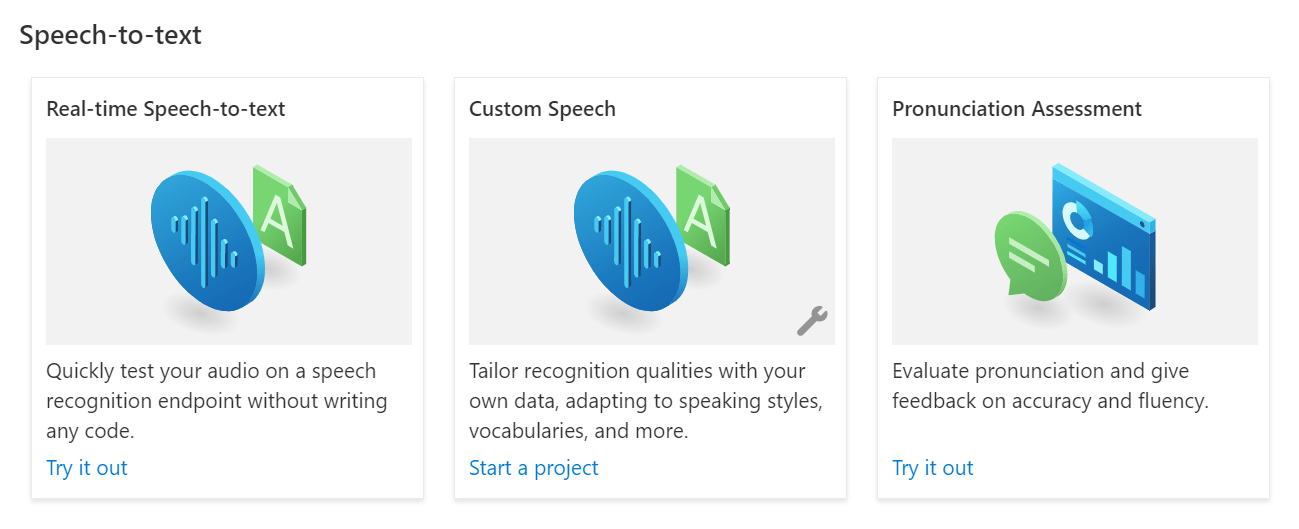

At a command prompt, run the following command.

Reference documentation | Package (NuGet) | Additional Samples on GitHub

In this how-to guide, you learn how to recognize and transcribe speech to text in real time. To call the Speech service by using the Speech SDK, you need to create a SpeechConfig instance. This class includes information about your subscription, like your key and associated location/region, endpoint, host, or authorization token.

Create a Speech resource on the Azure portal, then create a SpeechConfig instance by using your key and location/region.
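Creating the SpeechConfig from your key and location/region can be sketched as follows, with both values read from environment variables so the key is not hard-coded in source. This assumes the Microsoft.CognitiveServices.Speech NuGet package. The variable names SPEECH_KEY and SPEECH_REGION and the class SpeechConfigFactory are hypothetical choices.

```csharp
using System;
using Microsoft.CognitiveServices.Speech;

// Hypothetical factory, for illustration only.
static class SpeechConfigFactory
{
    // Hypothetical variable names; use whatever your deployment defines.
    public const string KeyVariable = "SPEECH_KEY";
    public const string RegionVariable = "SPEECH_REGION";

    // Builds a SpeechConfig from the Speech resource's key and location/region.
    public static SpeechConfig FromEnvironment()
    {
        var key = Environment.GetEnvironmentVariable(KeyVariable);
        var region = Environment.GetEnvironmentVariable(RegionVariable);

        // Fail early with a clear message instead of an authentication error later.
        if (string.IsNullOrWhiteSpace(key) || string.IsNullOrWhiteSpace(region))
        {
            throw new InvalidOperationException(
                $"Set {KeyVariable} and {RegionVariable} to your Speech resource's key and region.");
        }

        return SpeechConfig.FromSubscription(key, region);
    }
}
```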
